We used to call writing code by hand "manual." Then AI agents came along, and we called that "automated."

It's time to update the vocabulary.

Sitting with an agent, prompting it, watching it stumble, re-prompting, reviewing its output, fixing what it broke. That's not automation. That's the new manual.

You feel it most when the task is large and mechanical. Ask an agent to remove barrel imports across 6,000 files. It scans files one by one. Misses edge cases. Breaks imports. Retries. Hallucinates an API that doesn't exist. You end up babysitting it through something that should be brainless.

Agents are great at reasoning. They're not so great at doing the same thing correctly 6,000 times, especially given context window limits. And even if the agent did get every file right? Every file means reading the source, generating the replacement, verifying the output. That's thousands of LLM calls. Millions of tokens. At current API pricing, you could burn through hundreds of dollars on a single migration and still wait hours for it to finish.

For that, you need a different kind of automation. One level up.

Run `npx codemod ai`, then ask your agent the same question.

Instead of flailing through files, the agent now builds a codemod: a deterministic, compiler-aware transformation that scans the entire repo in seconds, rewrites every matching import correctly, and validates the result with tests.

The agent handles the thinking. The codemod handles the doing.

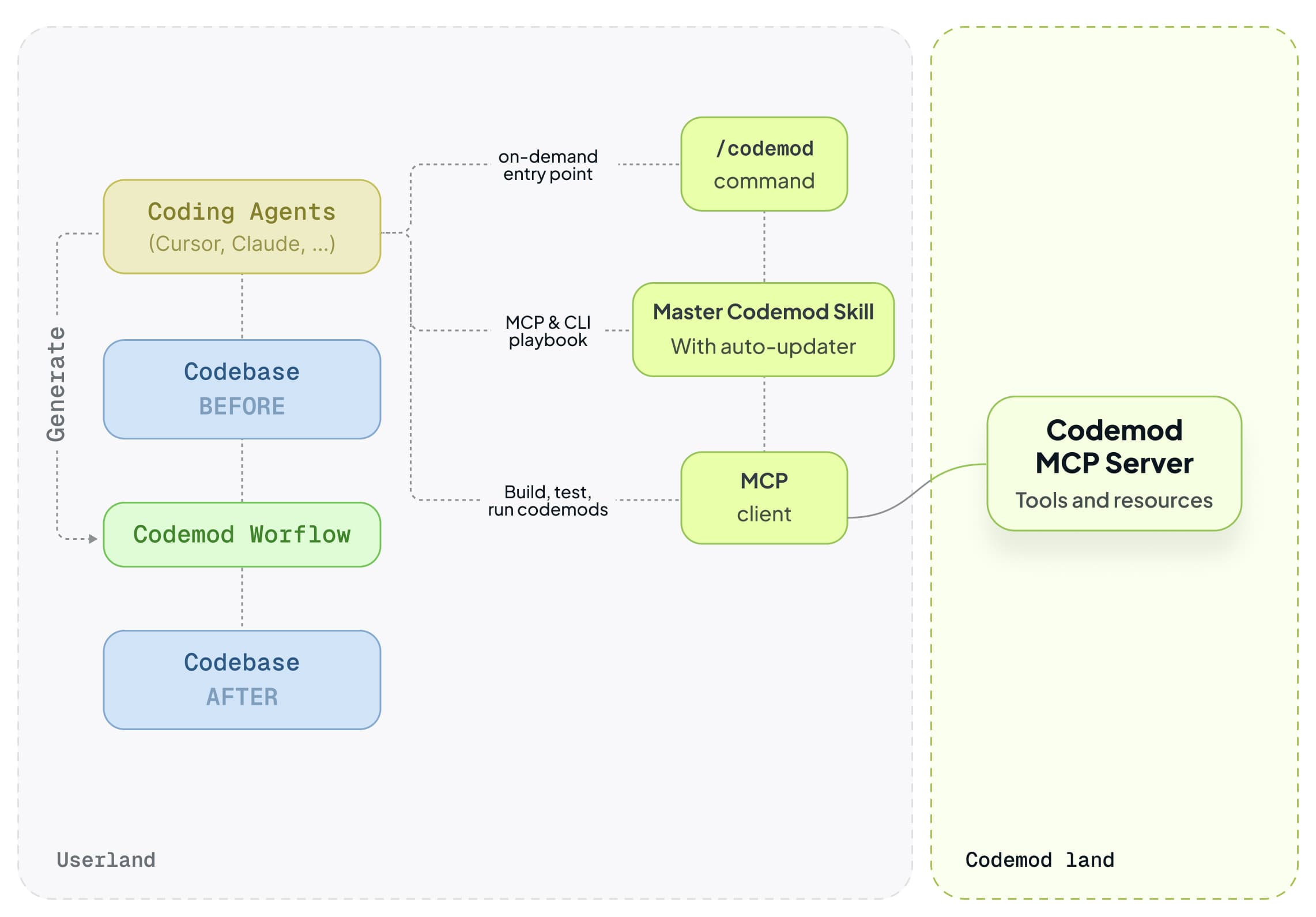

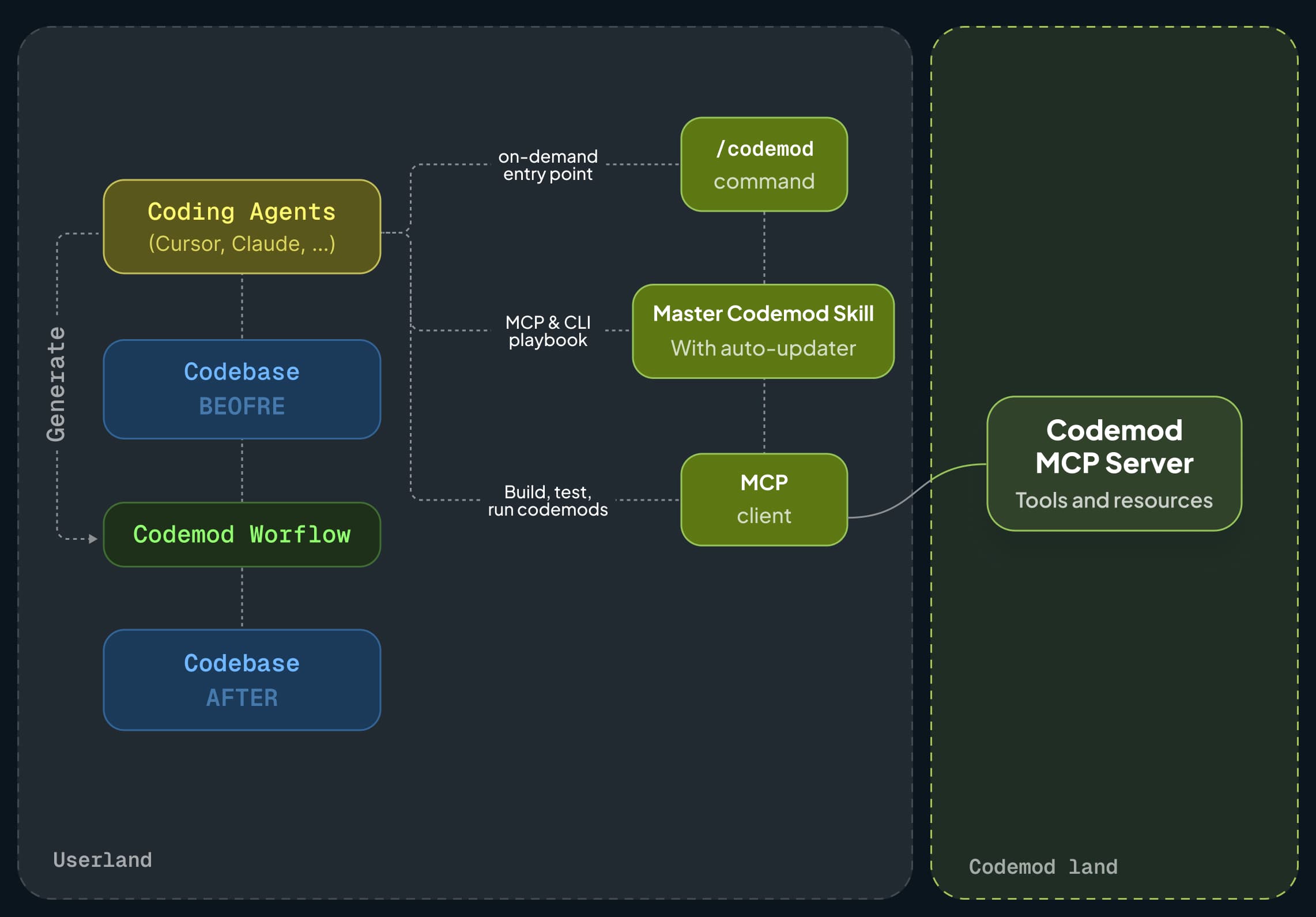

npx codemod ai is an upgrade to how your agent thinks about large-scale changes:

- A persistent skill. The agent learns when to reach for codemods instead of brute-forcing file edits. This becomes its default behavior for migrations; you don't have to remind it.

- MCP tools. AST dumps, tree-sitter node types, test execution, structured documentation. The agent pulls what it needs on demand instead of guessing from stale training data.

- A `/codemod` command. An explicit entry point for creating, running, and iterating on codemods. No ambiguity about what to do.

- Auto-updates. Skills and tools stay fresh. Your agent gets better at migrations over time without you doing anything.

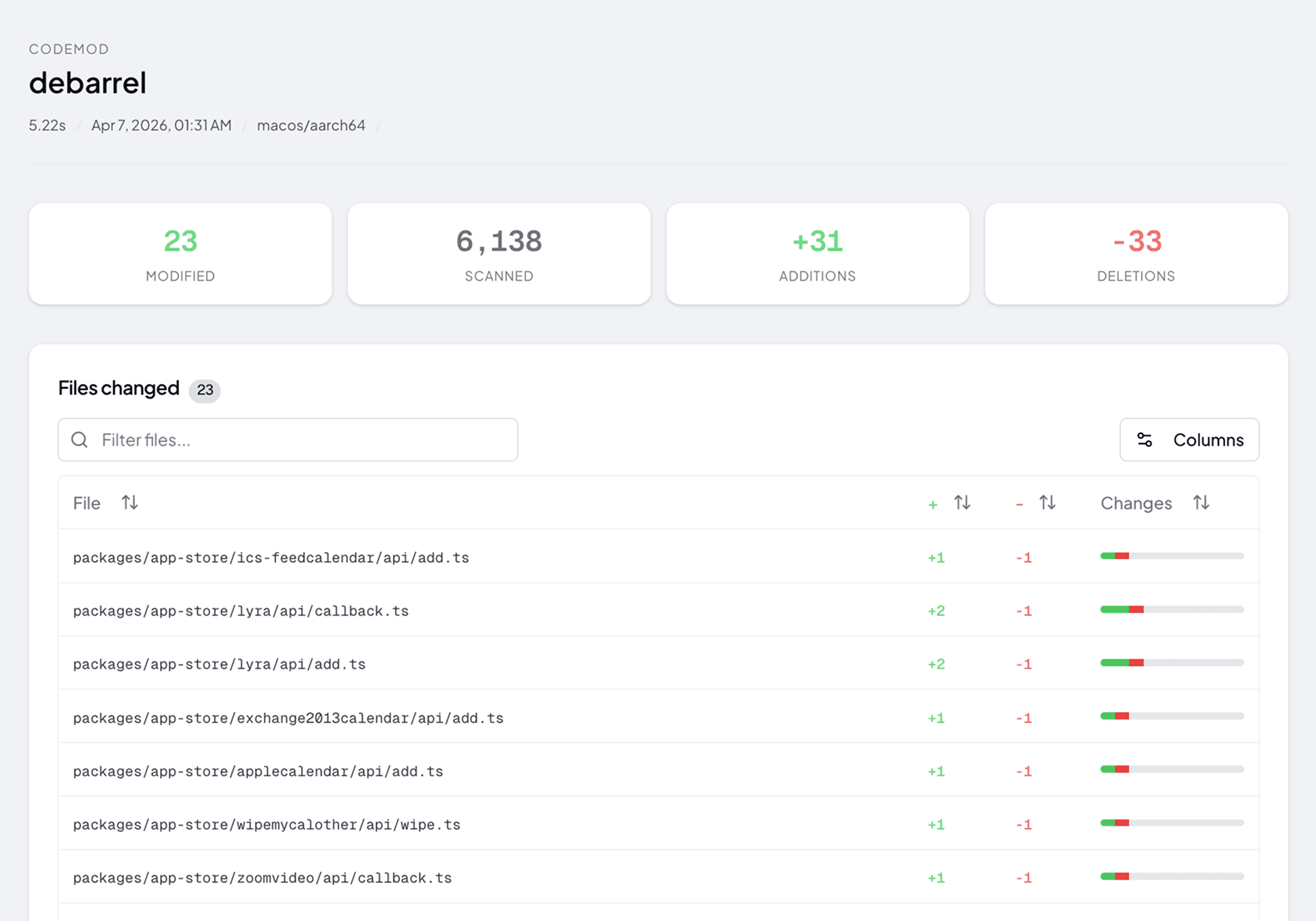

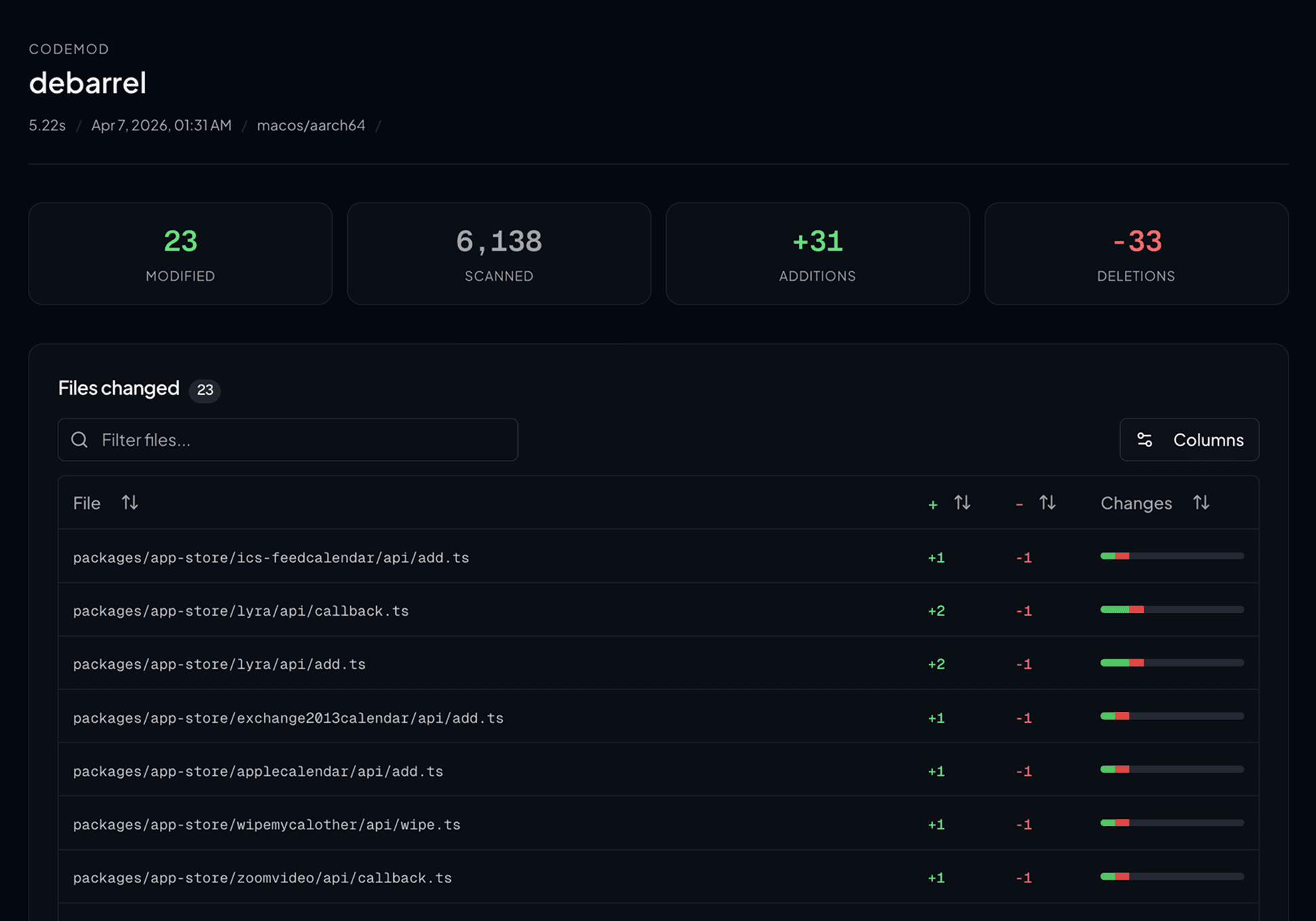

We wanted to debarrel a production monorepo. Barrel files, those index.ts files that re-export everything, are a well-known performance killer. They pull in entire module trees when you only need one export.

The fix sounds trivial: import each symbol straight from the file that defines it instead of going through the barrel.

Multiply that by thousands of files, add pnpm workspaces, tsconfig aliases, package.json export restrictions, and five different re-export patterns, and you've got yourself a weekend.

Or five seconds, if you have the right codemod.

Before writing a single line of codemod code, we ran `npx codemod ai`.

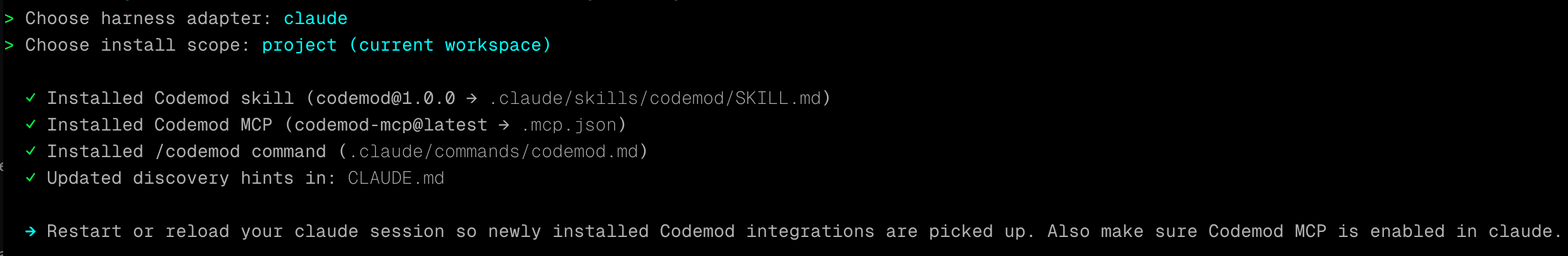

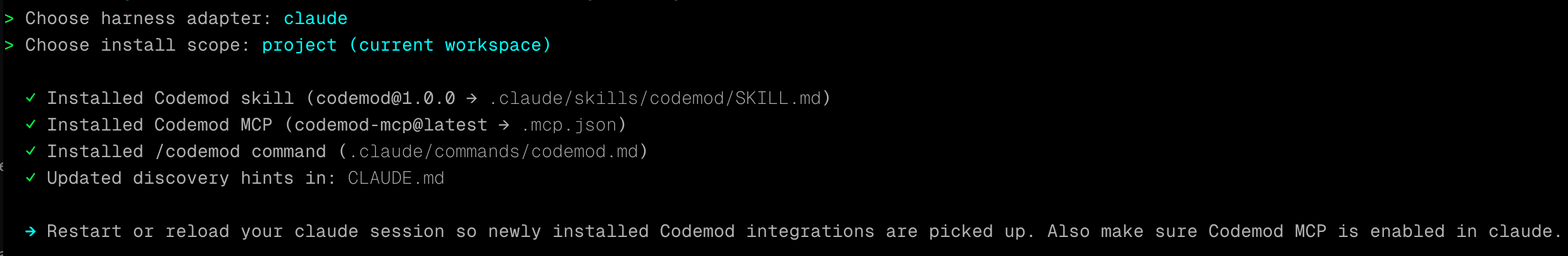

This one command transformed our Claude Code setup. It installed:

- A persistent skill into Claude's memory, teaching it how to plan codemods, structure transformations, and follow best practices. From this point on, when we ask Claude to do a large-scale change, it knows it should build a codemod instead of editing files one by one.

- MCP tools: AST inspection, tree-sitter node types, test execution, structured documentation. Claude can now introspect code at the compiler level and run codemod tests without us touching the terminal.

- A `/codemod` command, so we (or Claude) can kick off the codemod workflow explicitly.

That was the setup. Took about 10 seconds.

We didn't write the codemod ourselves. We asked Claude to do it. And because it now had the codemod skill and tools, it didn't try to sed its way through 6,000 files. It built a proper codemod using JSSG (our native JavaScript runtime for ast-grep) with workspace-level semantic analysis.

The semantic analyzer (powered by oxlint's oxc_resolver) traces import { Button } from "./components" through the barrel file, finds export { Button } from "./Button", and rewrites the import to skip the barrel entirely.
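The tracing step can be pictured with a toy model. The sketch below is not the JSSG implementation: `BarrelExports` and `debarrelImport` are hypothetical names, and the barrel is assumed to be already parsed into a map from exported name to defining module.

```typescript
// Toy model of a barrel: exported name -> module that defines it,
// written relative to the barrel's own directory.
type BarrelExports = Map<string, string>;

// Hypothetical helper (not a JSSG API): rewrite one named import so it skips the barrel.
function debarrelImport(
  name: string,
  barrelSpecifier: string, // what the consumer wrote, e.g. "./components"
  barrel: BarrelExports,
): string {
  const target = barrel.get(name);
  if (target === undefined) {
    // The barrel does not re-export this name: leave the import untouched.
    return `import { ${name} } from "${barrelSpecifier}";`;
  }
  // "./Button" is relative to the barrel's directory, so append it to the specifier.
  const direct = `${barrelSpecifier}/${target.replace(/^\.\//, "")}`;
  return `import { ${name} } from "${direct}";`;
}

const componentsBarrel: BarrelExports = new Map([["Button", "./Button"]]);
console.log(debarrelImport("Button", "./components", componentsBarrel));
// prints: import { Button } from "./components/Button";
```

A real resolver reads the export map from the AST and follows module resolution rules; the map here only stands in for that result.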

The first version worked. On simple cases.

Real codebases are not simple cases. Here's what broke, and how the agent fixed each one, all within the codemod, not by hand-editing files.

Aliases that became filesystem paths. import { Button } from "~/components" is a tsconfig alias. The codemod was converting it to ../../src/components/Button. Technically correct. Practically useless: the project uses aliases for a reason.

Fix: preserve the original import prefix. ~/components becomes ~/components/Button, not a relative path.
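One way to read this fix: resolve on disk, but emit the new specifier by extending what the author originally wrote. A minimal sketch, assuming the resolver hands back absolute paths; `preservePrefix` and the `/repo/...` paths are made up for illustration.

```typescript
// Hypothetical helper: keep the author's specifier (alias or relative) as the prefix
// and append only the part of the resolved file that sits below the barrel's directory.
function preservePrefix(
  originalSpecifier: string, // e.g. "~/components"
  barrelDir: string,         // resolved directory of the barrel (assumed absolute)
  targetFile: string,        // resolved file that defines the export (assumed absolute)
): string | null {
  const prefix = barrelDir.endsWith("/") ? barrelDir : `${barrelDir}/`;
  if (!targetFile.startsWith(prefix)) {
    // The definition lives outside the barrel's directory; the caller needs another strategy.
    return null;
  }
  // Drop the directory prefix and the source-file extension.
  const suffix = targetFile.slice(prefix.length).replace(/\.(tsx?|jsx?)$/, "");
  return `${originalSpecifier}/${suffix}`;
}

console.log(
  preservePrefix("~/components", "/repo/src/components", "/repo/src/components/Button.tsx"),
);
// prints: ~/components/Button
```

Because the alias is never expanded in the output, the rewritten import keeps working if the alias is later pointed somewhere else.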

The ../index gotcha. import { X } from "../index" was being rewritten to ../index/temp-keys. The path joiner treated index as a directory name instead of a file.
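A plausible shape for the repair is to strip a trailing `/index` before joining, so the barrel file is not mistaken for a directory. This is a sketch under that assumption; `barrelBase` and `joinSpecifier` are hypothetical names, and `../temp-keys` is presumably the intended result rather than something the post states.

```typescript
// "../index" names the file index.ts inside "..", so the directory to join onto is "..".
function barrelBase(specifier: string): string {
  return specifier.replace(/\/index(\.(tsx?|jsx?))?$/, "");
}

// Join a consumer's barrel specifier with a path written relative to the barrel.
function joinSpecifier(specifier: string, exportFile: string): string {
  return `${barrelBase(specifier)}/${exportFile.replace(/^\.\//, "")}`;
}

console.log(joinSpecifier("../index", "./temp-keys"));  // prints: ../temp-keys
console.log(joinSpecifier("./components", "./Button")); // prints: ./components/Button
```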

Five flavors of re-export. Barrels aren't just export { X } from "./X". They also do:

- `export { default as Modal } from "./Modal"`

- `import { calc } from "./calc"; export { calc }`

- `export * as MathOps from "./operations"`

- `export { internalFn as publicFn } from "./internal"`

Each pattern needs different handling. The codemod detects and rewrites all of them, except namespace re-exports (export *), which it correctly leaves alone.
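To make the distinction concrete, here is a toy classifier for these patterns. It uses regular expressions on single-specifier statements purely for illustration; the codemod described here works on the AST, and `classifyReExport` and the kind names are hypothetical.

```typescript
type ReExportKind =
  | "named"              // export { X } from "./X"
  | "default-as"         // export { default as X } from "./X"
  | "renamed"            // export { a as b } from "./a"
  | "namespace"          // export * ... (left alone)
  | "import-then-export" // import { x } from "./x"; export { x }
  | "unknown";

// Classify one statement. Only single-specifier forms are handled in this sketch.
function classifyReExport(statement: string): ReExportKind {
  const s = statement.trim();
  if (/^export\s+\*/.test(s)) return "namespace";
  if (/^import\s/.test(s) && /;\s*export\s*\{/.test(s)) return "import-then-export";
  const m = /^export\s*\{\s*(\w+)(?:\s+as\s+(\w+))?\s*\}\s*from\s/.exec(s);
  if (m === null) return "unknown";
  if (m[2] === undefined) return "named";
  return m[1] === "default" ? "default-as" : "renamed";
}

const samples = [
  'export { Button } from "./Button"',
  'export { default as Modal } from "./Modal"',
  'import { calc } from "./calc"; export { calc }',
  'export * as MathOps from "./operations"',
  'export { internalFn as publicFn } from "./internal"',
];
for (const sample of samples) {
  console.log(classifyReExport(sample), "<-", sample);
}
```

A rewrite step would then branch on the kind: for example, a renamed re-export has to import the original name and alias it, while a namespace re-export is skipped.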

The agent didn't just fix issues. It wrote test cases for each one. The final codemod has 12 test suites, 58 assertions:

| Test | What it proves |
| --- | --- |
| | The happy path works |
| | Different consumers get different rewrites |
| | Import-then-export chains are followed |
| | Partial debarrel when barrel has its own code |
| | Files that aren't barrels aren't touched |
| | Real pnpm workspace: |

The agent ran these after every change using the MCP's test tool. No terminal. No manual validation. Just red/green feedback in the conversation.

6,000+ files scanned in ~5 seconds. Every barrel import rewritten correctly. Every workspace boundary respected. Every alias preserved.

The codemod is published to the registry. Anyone can run it.

One command. No agent required. The full source code is on GitHub.

If you maintain a large codebase, migrations are not a one-time event. They're a constant. A new API version. A deprecated pattern. A framework upgrade. A performance fix that touches every file.

Agents alone can't handle this reliably. Scripts are brittle and disposable. Neither scales.

Codemods are the middle ground: deterministic where they must be, flexible where they should be, reusable across teams and repos.

`npx codemod ai` gives any agent (Cursor, Claude Code, OpenCode, Antigravity) this missing capability.

Could you write all of this with jscodeshift instead? You absolutely can. jscodeshift has been the go-to codemod tool for a decade, and for good reason. It works. A huge number of production codemods have been written with it. If your transform is single-file and you know the API well, it's still a perfectly fine choice.

But there are a few reasons we think codemod ai + JSSG is a better default, especially when agents are doing the writing.

- Semantic analysis. jscodeshift processes one file at a time. It can't trace an import through a barrel into another module; you'd have to build that resolution layer yourself. JSSG supports workspace-level semantic analysis out of the box: definition lookup, cross-file reference tracking, and module resolution powered by oxc_resolver. That's what made the debarrel codemod possible in the first place.

- Not just JavaScript. jscodeshift is JS/TS only. JSSG is built on ast-grep and tree-sitter, which means the same tool and workflow works for Python, Rust, Go, Java, and any other language tree-sitter supports. One tool to learn, one system for your agent to use.

- A minimal API that agents can actually use. jscodeshift's API is large, loosely typed, and full of chained method calls that LLMs love to hallucinate. JSSG's API is small and close to the raw AST: `node.find()`, `node.findAll()`, `node.replace()`, `node.text()`. Less surface area means fewer hallucinations and less time debugging phantom methods.

- MCP tools that let the agent see the AST. This is the part that changes everything for agent-driven codemods. Through the Codemod MCP, the agent can dump the AST of any code snippet, browse tree-sitter node types, and run tests, all within the conversation. It doesn't have to guess what the AST looks like. It can look. That feedback loop is what makes agents reliable codemod authors instead of hopeful ones.

- Performance. Parsing and transforming 6,000+ files with a JavaScript-based parser takes real time. JSSG's engine uses tree-sitter for parsing and Rust for the semantic analyzer. Full workspace scan in seconds, not minutes.

- Actively maintained. jscodeshift's last meaningful update was years ago. The ecosystem has moved on, but the tool hasn't. JSSG is under active development: new features, bug fixes, and improvements ship regularly.

- A registry. When you build a codemod, you can publish it to the Codemod Registry and anyone can run it with a single `npx codemod <name>` command. No cloning repos, no setup. The debarrel codemod we built in this post is already there.

- A workflow engine. Real migrations are rarely a single transform. JSSG codemods can be composed into multi-step workflows with deterministic steps, AI-powered steps (for the cases where pattern matching isn't enough), and shared state between steps. You define the workflow in YAML, and the engine handles orchestration, sharding across PRs, and progress tracking.

- A platform beyond the CLI. Building the codemod is step one. For large organizations, you also need to know: how many files are affected? Which teams own them? How is the migration progressing? The Codemod Platform provides insights and metrics from semantic analysis across your repos, and cloud campaigns that break large migrations into team-scoped PRs, so you're not asking 15 teams to review a single 2,000-file PR.

jscodeshift was the right tool for its era. But the landscape has changed: codemods are increasingly agent-authored, polyglot, and workspace-aware. The tooling should reflect that.

Run `npx codemod ai`. Then ask your agent to do something it couldn't do well before.

You'll be able to generate codemods, test them automatically, apply changes at scale, and publish them to the Codemod Registry for your team or the community.

Follow the Quickstart docs to learn more.

AI agents are great at thinking. Codemods are great at doing. npx codemod ai brings both together, so you can stop babysitting migrations and start shipping them.